Submitting an app

This page describes how to run a first Spark application programmatically through Data Mechanics API.

The Data Mechanics gateway lives in your Kubernetes cluster and exposes a URL that has been shared with you during the initial setup of the platform.

Let's call it https://<your-cluster-url>/.

The Data Mechanics API is exposed at https://<your-cluster-url>/api/. You can run, configure, and monitor applications using the different endpoints available.

To know more about the API routes and parameters, check out the API reference.

Important Note

You need to call spark.stop() at the end of your application code, where spark can be your Spark session or Spark context. Otherwise your application may keep running indefinitely.

For now, let's focus on running a first application!

The command below will run the Monte-Carlo Pi computation contained in all Spark distributions:

Here's a breakdown of the payload:

- We assign the

jobName"sparkpi2" to the application. A job is a logical grouping of applications. It is typically a scheduled workload that runs every day or every hour. Every run of a job is called an application in Data Mechanics. In the dashboard, the Jobs view lets you track performance of jobs over time. A uniqueappNamewill be generated from thejobName. - Default configurations are overriden in

configOverrides:- This is a Scala application running Spark

3.0.0. - The command to run is specified by

mainApplicationFile,mainClass, andarguments. - We override the default configuration and request 2 cores per executor.

- This is a Scala application running Spark

The API then returns something like:

Note that some additional configurations are automatically set by Data Mechanics.

In particular, the appName is a unique identifier of this Spark application on your cluster.

Here it is has been generated automatically from the jobName, but you can set it yourself in the payload of your request to launch an app.

When the appName is automatically generated, it uses the following pattern: {job_name}-{YYYYMMDD}-{HHmmSS}-{word}.

Beside the

appName, Data Mechanics has also set some defaults to increase the stability and performance of the app. Learn more about configuration management and auto-tuning.

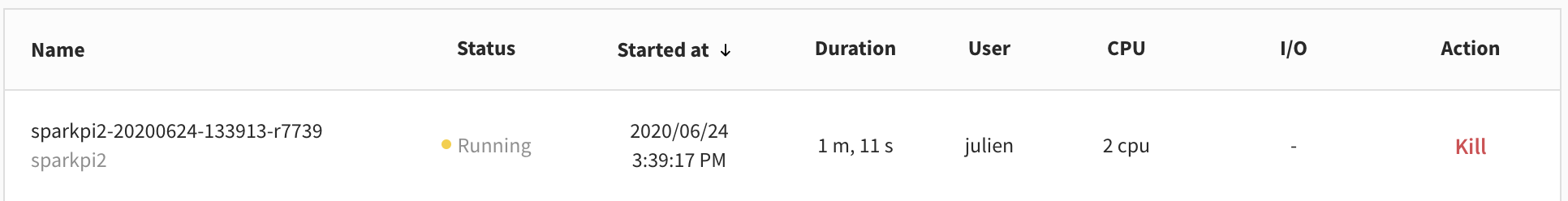

The running application should automatically appear in the dashboard at https://<your-cluster-url>/dashboard/:

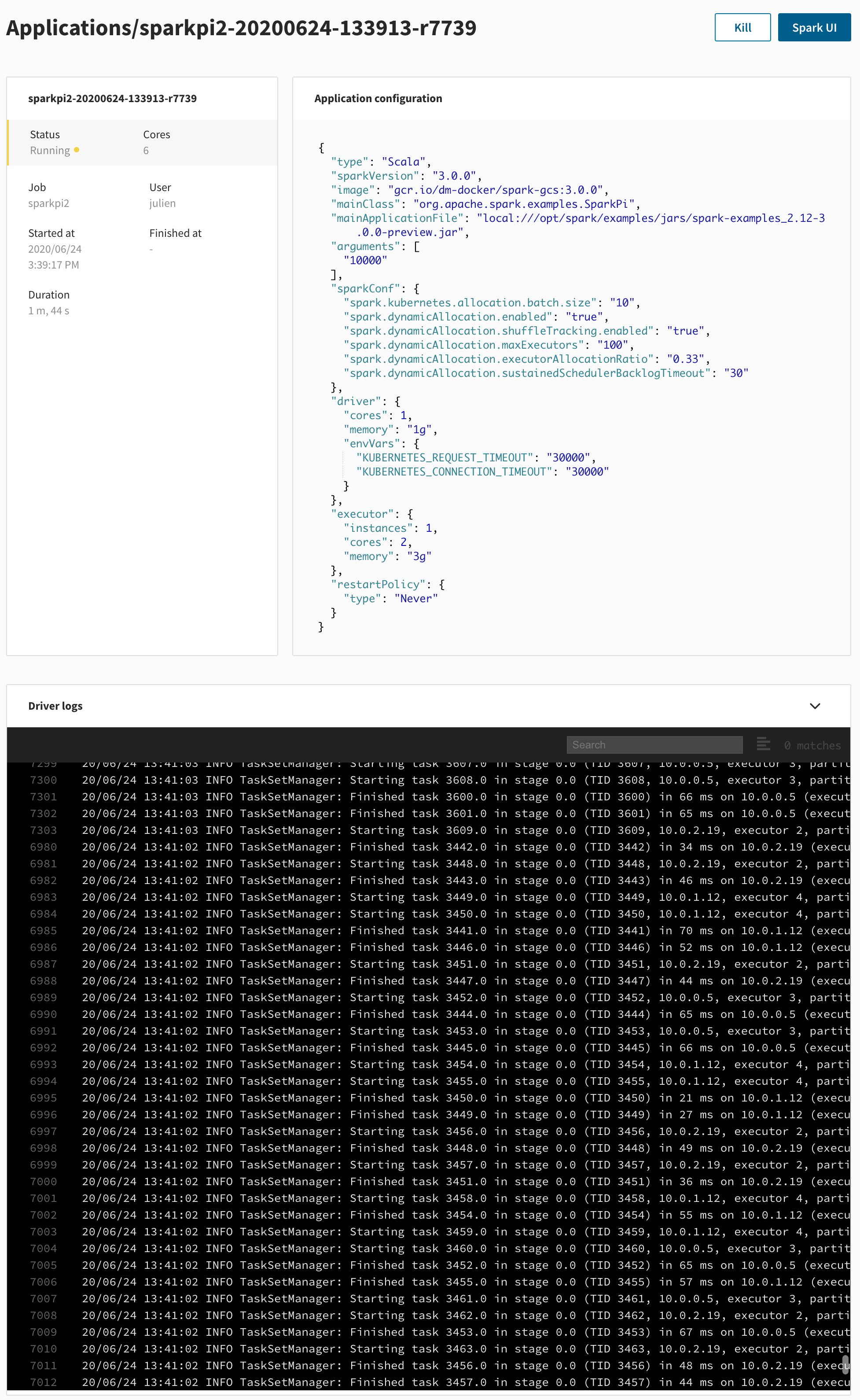

Clicking on the application opens the application page. At this point, you can open the Spark UI, follow the live log stream, or kill the app.

This example uses a JAR embedded in the Spark Docker image and neither reads nor writes data. For a more real-world use case, learn how to Access your own data.